I Don't Use AI Because I Believe in Consent

by Tatiana O'Toole • About 7 min read


This is a dark and very personal story. I share it because I want there to be no confusion about my stance on AI and why I am so staunchly against the technology.

It's going to be discussing consent and the psychological impact of when consent isn't respected. It’s also going to talk about child sexual abuse.

Please proceed with caution for your own mental health.

-

I spent a good chunk of my life being told that my consent did not matter. Between the ages of 12 and 18, I was molested, groomed, assaulted and raped by multiple people. I coped using drugs and alcohol starting around age 15.

In 2015, I was raped again, and I finally broke. I didn’t leave my house, I stopped caring for myself, and I slipped into a weed-fueled numbness so the constant oscillation between euphoria and suicidal depression would just stop.

In 2016, after recognizing how bad my mental health had gotten, I reached out to the online creative communities to try to make art again. I needed purpose.

It was these communities who helped me recover. If you chatted with me during this period of recovery, thank you. Your friendship and kindness changed my life in ways you cannot begin to understand.

It was because of the people in the art and design communities and their passion for their work that I started to share my own work. I expressed opinions. Reevaluated things I was taught. Saw my past and future in a new light. Unlearned a lot of toxic beliefs. Stepped outside and made friends again. Spoke with diverse and fascinating people.

I made art, and in return, my art remade me.

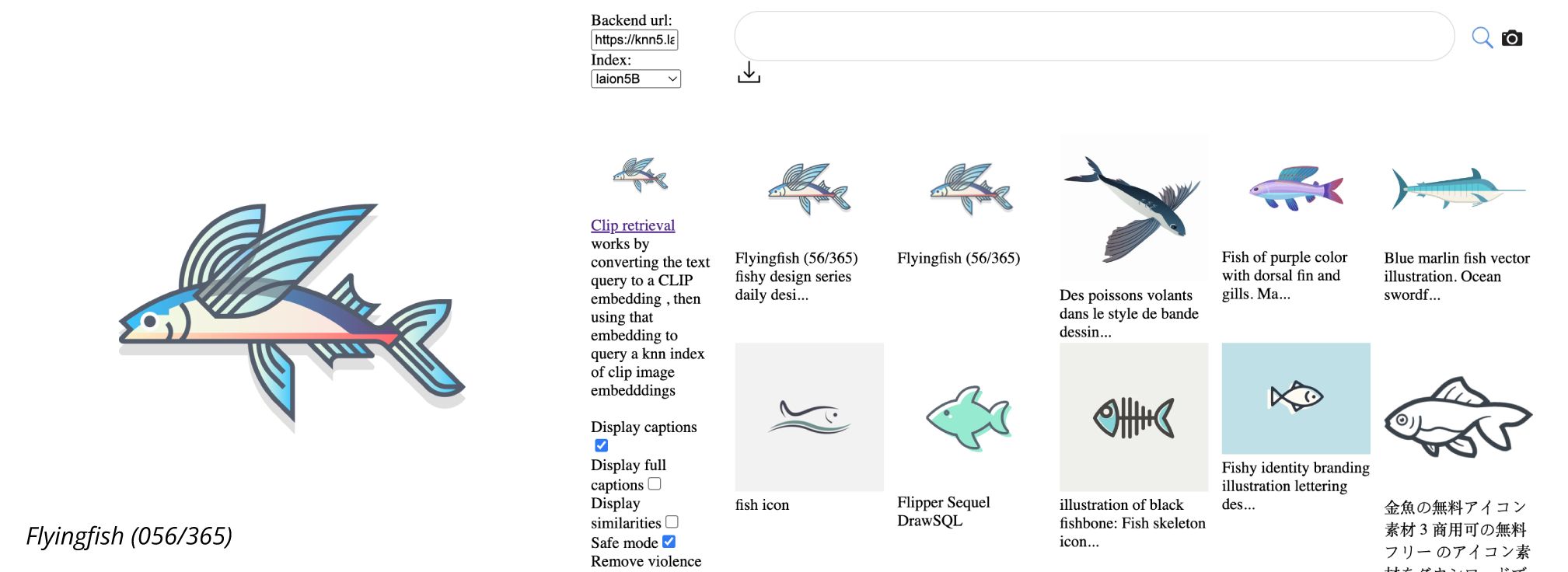

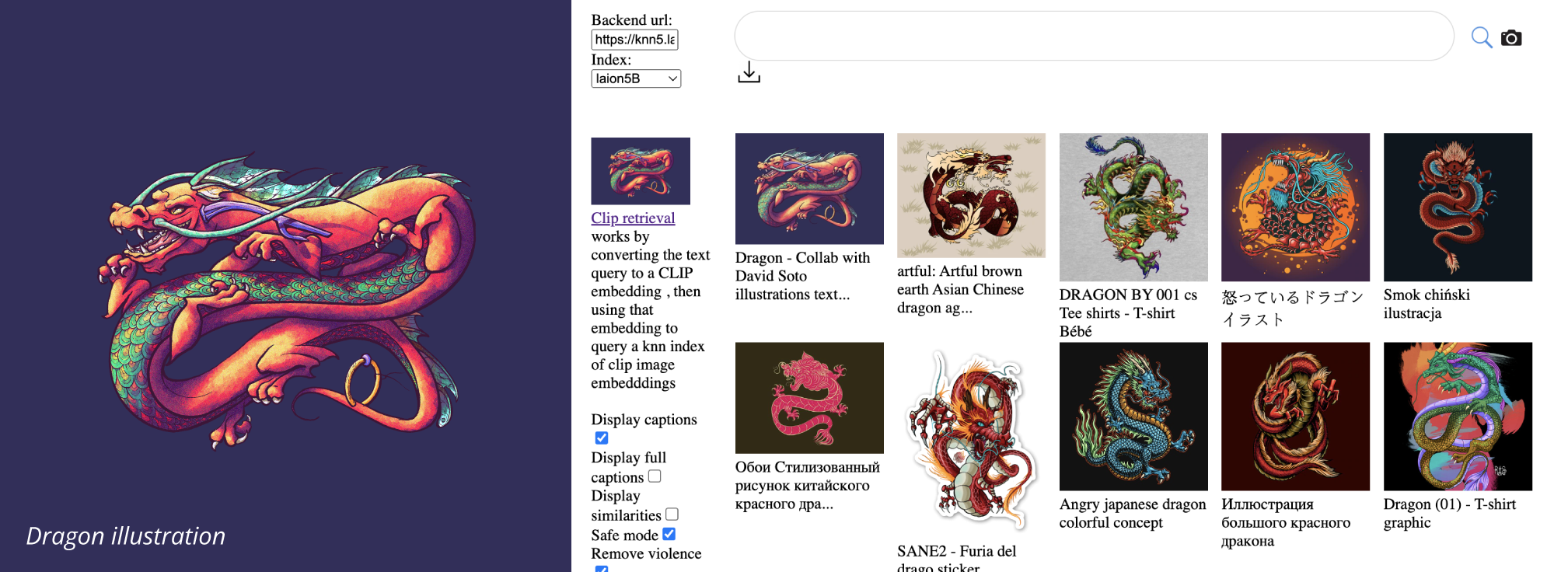

By the end of 2017, I was listed as one of Dribbble's top 50 liked designers. It was largely due to an extended art project where I illustrated something once a day, every day. What people and clients saw as my portfolio was actually a journey of survival and creating purpose after being broken. Adding to my portfolio was a reason for me to wake up the next day.

The people in the industry who encouraged me (and encourage me to this day) taught me how to use the tools that ended up saving my life.

Again, thank you.

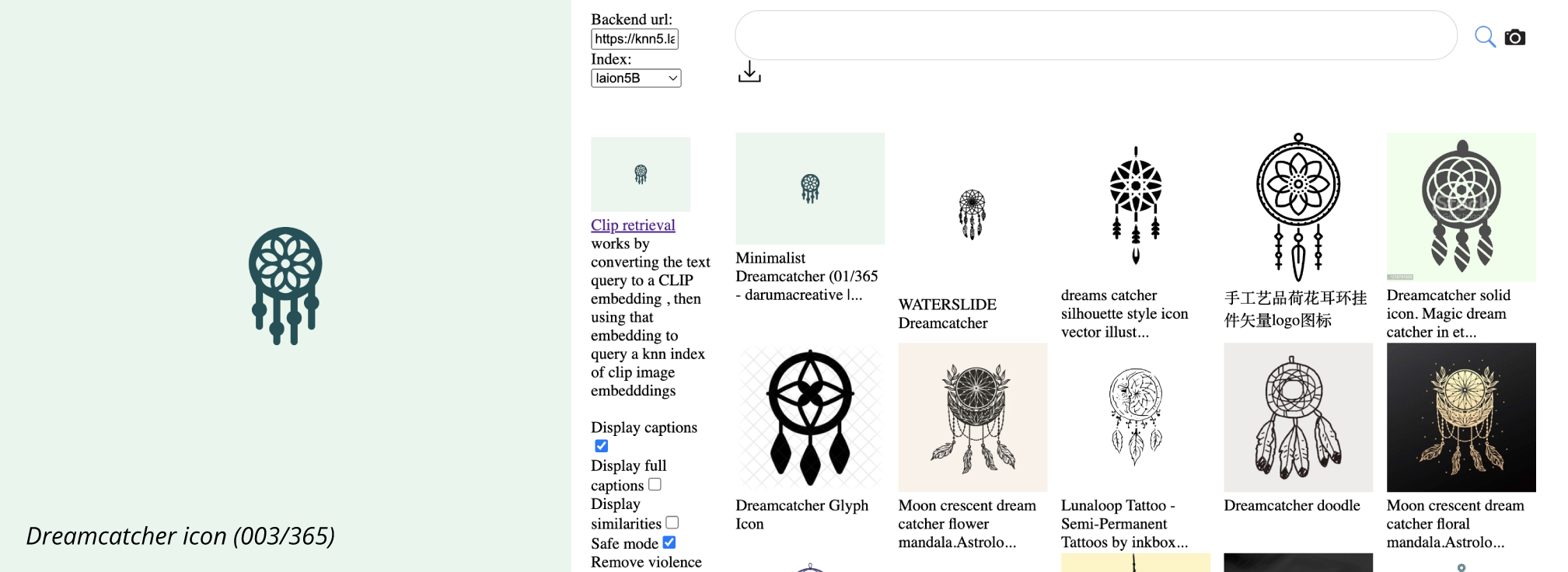

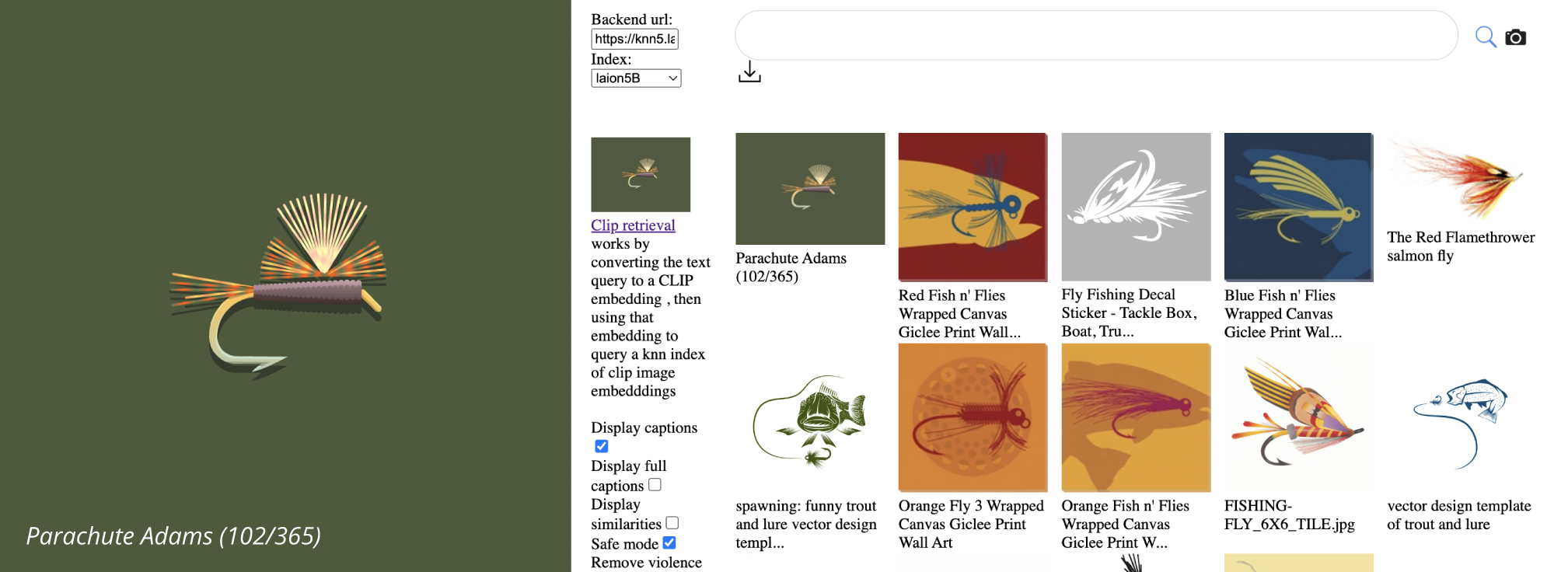

The LAION datasets scraped data from the Common Crawl (2014–2021) which contained my works (and yours) from Dribbble and other sites. I found a significant portion of my portfolio in their datasets in 2022.

My art that I made to survive and heal was taken without my consent, fed into AI models, and used to create a digital competitor that threatened my career, the careers of my community, and the careers of future artists and designers.

I found 96 pieces of my work in the LAION 5B dataset before I had to stop looking.

Devastated and freshly re-violated, I stopped sharing art for about three years. I blocked everything and everyone AI, got therapy, made art privately, and wrote a lot.

This year, I found out that the LAION datasets were so carelessly assembled that at least 3,226 child sexual abuse images were gathered alongside my work (and hundreds of millions of other artists', designers', animators', and creatives' portfolios) and fed into the largest LLMs/AI models available. LAION knew of the possibility of such materials existing in its datasets and still chose to publish them. The founder, Christoph Schuhmann, was quoted in a Bloomberg article as saying, "We could have filtered out violence from the data we released, but we decided not to because it will speed up the development of violence detection software." To be very clear, it is unethical and illegal to publicly host images of sexual violence for anyone to view and download; justifying it by way of innovation is insulting and damaging to the deceased and surviving victims. This is especially heinous when it is known that such materials can be and are used to generate AI CSAM.

Our work was blended together with this abuse, and the results were fed into machines that are currently being used to create further horrifying, new forms of abuse. Due to the hunger and drive to consume any and all available data in the development race of AI, it is more than certain that these datasets, along with the abusive materials within them, were fed into all the major AI products of today's world. On top of this, organizations fighting CSAM are trying to raise the alarm that genAI technology is rapidly making it impossible for them to identify, locate, and rescue children.

For that reason alone, we should be ceasing all generative AI development. And yet, we only see acceleration in its use and development.

I made a promise to myself that I would do everything I could to never be sexually assaulted again. That means fighting for systemic change. Part of that work is personally recognizing and understanding what consent is and respecting it in all areas of life, something we all struggle with as humans.

Consent needs to be well informed, given freely, and able to be withdrawn without threat.

On top of its other crimes against humanity, tech is setting an atrocious precedent for the global community by absorbing data en masse and ignoring consent. This kind of data collection requires consent, and it requires users to be fully aware of how their data will be used and by whom. Tech companies cannot meet that standard because they cannot securely protect the data, nor do they want to. Private information of individuals across the globe is the new oil and gold. Users have very little choice, as they are forced to accept terms of service that demand they relinquish data privacy rights to allow for mass data training. We have no other options; companies have monopolistic ownership of markets. If, by some miracle, a company arises that promises data privacy and protection, it is only a matter of time before it is hacked for its data, betrays its users by changing its data privacy stance, or sells off to another, less scrupulous company. This is coercion and entrapment.

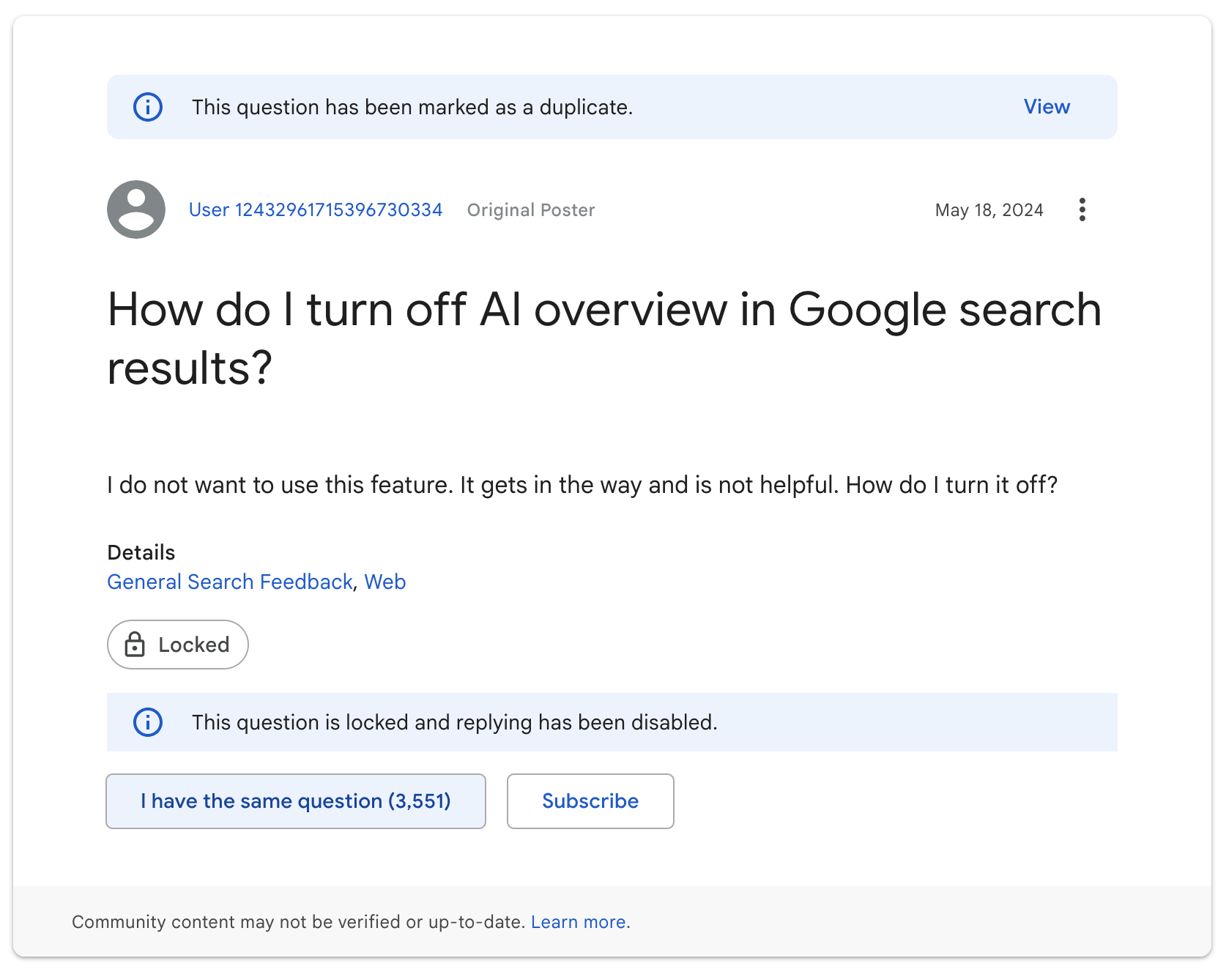

In addition, users are being force-fed AI technology without their permission and with no option to opt out. This is another violation of consent. This tech carries the baggage and cost of unethical actions and systems. Performing an AI bait-and-switch with platforms, products, and services that people previously consented to using is dishonest, deceitful, and a continuing dangerous precedent. This is not technology advancing and providing newer and better products instead of old ones. In fact, AI is making most of these services and products much worse. In reality, it is a forced scam from which users cannot escape.

With the accelerated generation and spread of sexual abuse images of children and the dwindling ability to rescue child victims, the gross violation of human rights in relation to data privacy (especially with the sharp rise in authoritarianism globally), and the overbearing force-feeding of AI to users across the board, I refuse to use this vile, putrid, and cursed technology.

I refuse to use AI because I believe in consent. I did not consent to my works being trained on. I know my friends and loved ones in my community did not consent to have their works trained on. I know that our creative siblings in writing and music communities did not consent. I know that the people who shared personal photos online did not knowingly consent. I know that the children and adults in the photos in mass datasets used by sexual predators and abusers were and are unable to consent.

Because I believe in consent, I will not use the tools that were built off their abuse and contribute to their abuse.

With this, I encourage you to reevaluate your personal relationship with consent and the decision on whether or not to use generative AI tools.

I will be building my business for and with collaborators and clients who have the same views.

- Tatiana